Float Compression 0: Intro

I was playing around with compression of some floating point data, and decided to write up my findings. None of this will be any news for anyone who actually knows anything about compression, but eh, did that ever stop me from blogging? :)

Situation

Suppose we have some sort of “simulation” thing going on, that produces various data. And we need to take snapshots of it, i.e. save simulation state and later restore it. The amount of data is in the order of several hundred megabytes, and for one or another reason we’d want to compress it a bit.

Let’s look at a more concrete example. Simulation state that we’re saving consists of:

Water simulation. This is a 2048x2048 2D array, with four floats per array element (water height,

flow velocity X, velocity Y, pollution). If you’re a graphics programmer, think of it as a 2k square

texture with a float4 in each texel.

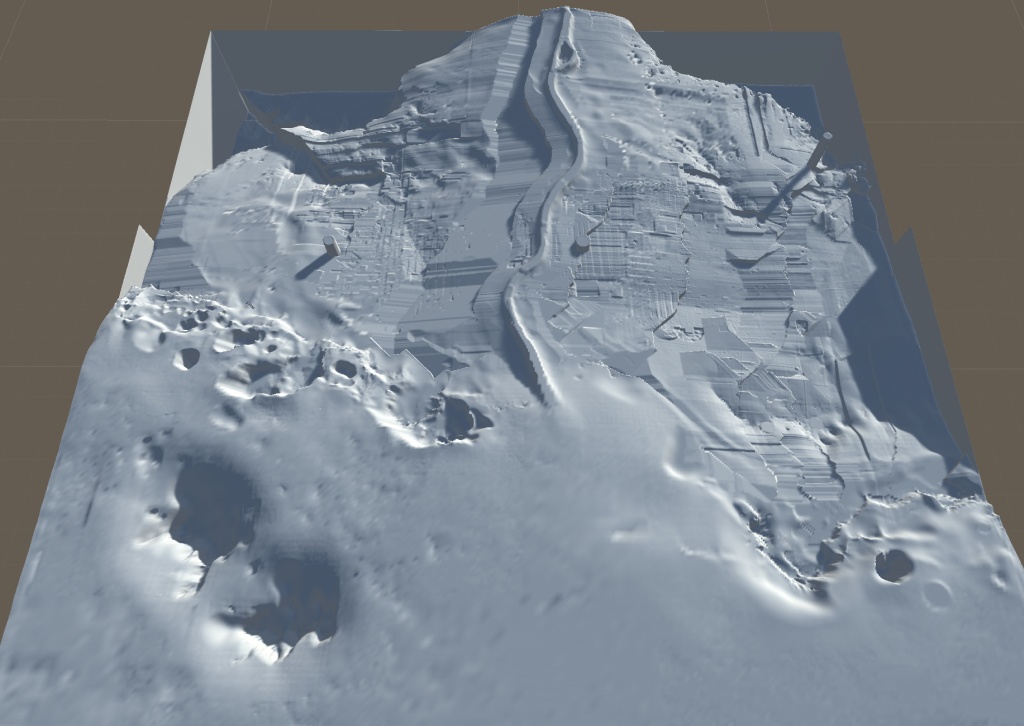

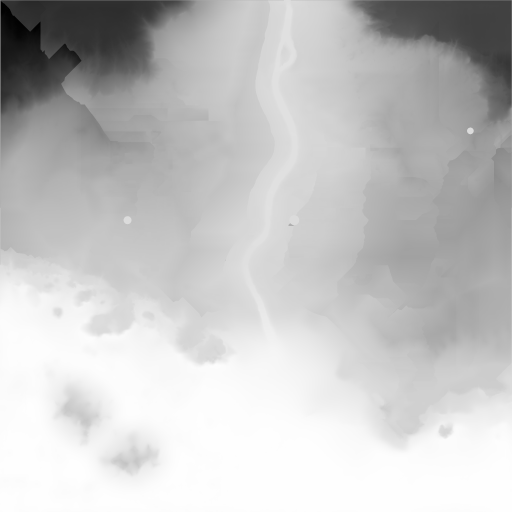

Here’s the data visualized, and the actual 64MB size raw data file (link):

Snow simulation. This is a 1024x1024 2D array, again with four floats per array element (snow amount, snow-in-water amount, ground height, and the last float is actually always zero).

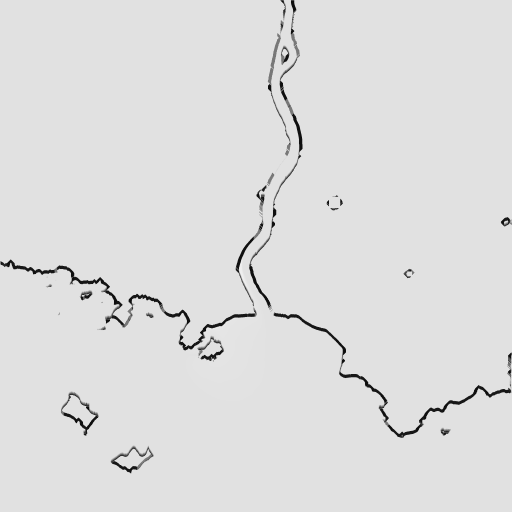

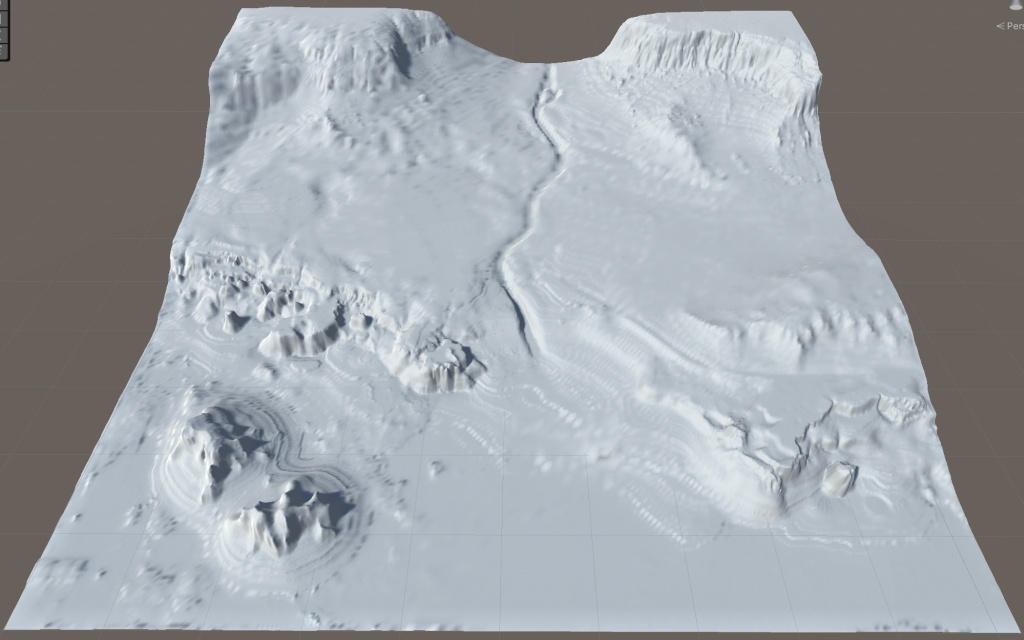

Here’s the data visualized, and the actual 16MB size raw data file (link):

A bunch of “other data” that is various float4s (mostly colors and rotation quaternions) and various float3s (mostly positions). Let’s assume these are not further structured in any way; we just have a big array of float4s (raw file, 3.55MB) and another array of float3s (raw file, 10.9MB).

Don’t ask me why the “water height” seems to be going the other direction as “snow ground height”, or why there’s a full float in each snow simulation data point that is not used at all. Also, in this particular test case, “snow-in-water” state is always zero too. 🤷

Anyway! So we do have 94.5MB worth of data that is all floating point numbers. Let’s have a go at making it a bit smaller.

Post Index

Similar to the toy path tracer series I did back in 2018, I generally have no idea what I’m doing. I’ve heard a thing or two about compression, but I’m not an expert by any means. I never wrote my own LZ compressor or a Huffman tree, for example. So the whole series is part exploration, part discovery; who knows where it will lead.

- Part 1: Generic Lossless Compression (zlib, lz4, zstd, brotli)

- Part 2: Generic Lossless Compression (libdeflate and Oodle entered the chat)

- Part 3: Data Filtering (simple data filtering to improve compression ratio)

- Part 4: Mesh Optimizer (mis-using mesh compression library on our data set)

- Part 5: Science! (zfp, fpzip, SPDP, ndzip, streamvbyte)

- Part 6: Optimize Filtering (optimizations for part 3 data filters)

- Part 7: More Filtering Optimization (better optimizations for part 3 data filters)

- Part 8: Blosc (testing Blosc compression library)

- Part 9: LZSSE and Lizard (lzsse8, lizard)