Font Rendering is Getting Interesting

Caveat: I know nothing about font rendering! But looking at the internets, it feels like things are getting interesting. I had exactly the same outsider impression watching some discussions unfold between Yann Collet, Fabian Giesen and Charles Bloom a few years ago – and out of that came rANS/tANS/FSE, and Oodle and Zstandard. Things were super exciting in compression world! My guess is that about “right now” things are getting exciting in font rendering world too.

Ye Olde CPU Font Rasterization

A tried and true method of rendering fonts is doing rasterization on the CPU, caching the result (of glyphs, glyph sequences, full words or at some other granularity) into bitmaps or textures, and then rendering them somewhere on the screen.

The FreeType library for font parsing and rasterization has existed since “forever”, as have operating system specific ways of rasterizing glyphs into bitmaps. Some parts of the hinting process were patented, leading to “fonts on Linux look bad” impressions in the old days (my understanding is that all these patents expired around 2010, so it’s all good now). Subpixel optimized rendering happened at some point too, which slightly complicates things. There’s a good overview of all this in the 2007 Texts Rasterization Exposures article by Maxim Shemanarev.

In addition to FreeType, these font libraries are worth looking into:

- stb_truetype.h – single file C library by Sean Barrett. Super easy to integrate! Article on how the innards of the rasterizer work is here.

- font-rs – fast font renderer by Raph Levien, written in Rust \o/, and an article describing some aspects of it. Not sure how “production ready” it is though.

But at the core the whole idea is still rasterizing glyphs into bitmaps at a specific point size and caching the result somehow.

Caching rasterized glyphs into bitmaps works well enough. If you don’t do a lot of different font sizes. Or very large font sizes. Or large amounts of glyphs (as happens in many non-Latin-like languages) coupled with different/large font sizes.
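To make the cache pressure a bit more concrete, here is a minimal sketch of such a glyph cache. Everything in it is hypothetical: `fake_rasterize` is a stand-in for a real rasterizer (FreeType, stb_truetype, ...) and `GlyphCache` is just an LRU dictionary keyed by glyph and pixel size, not any particular library's API.

```python
from collections import OrderedDict

def fake_rasterize(glyph, size_px):
    # Stand-in for a real rasterizer: returns an 8-bit coverage
    # bitmap of size_px * size_px bytes (all zeros here).
    return bytes(size_px * size_px)

class GlyphCache:
    """LRU cache of rasterized glyphs, keyed by (glyph, pixel size)."""

    def __init__(self, rasterize, budget_bytes):
        self.rasterize = rasterize
        self.budget_bytes = budget_bytes
        self.used_bytes = 0
        self.entries = OrderedDict()
        self.misses = 0

    def get(self, glyph, size_px):
        key = (glyph, size_px)
        if key in self.entries:
            self.entries.move_to_end(key)  # mark as most recently used
            return self.entries[key]
        self.misses += 1
        bitmap = self.rasterize(glyph, size_px)
        self.entries[key] = bitmap
        self.used_bytes += len(bitmap)
        # Evict least recently used bitmaps until we fit the budget again
        # (always keeping at least the bitmap we just made).
        while self.used_bytes > self.budget_bytes and len(self.entries) > 1:
            _, old = self.entries.popitem(last=False)
            self.used_bytes -= len(old)
        return bitmap
```

Every new (glyph, size) pair is a separate entry, and the bitmap size grows with the square of the font size, which is exactly why many sizes, large sizes, or large glyph sets hurt.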

One bitmap for varying sizes? Signed distance fields!

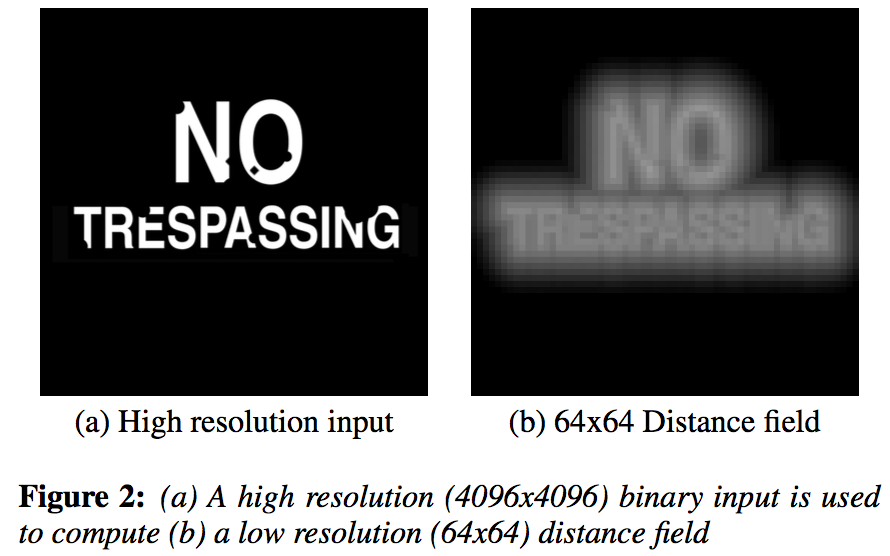

A 2007 paper from Chris Green, Improved Alpha-Tested Magnification for Vector Textures and Special Effects, introduced the game development world to the concept of “signed distance field textures for vector-like stuffs”.

The paper was mostly about solving “signs and markings are hard in games” problem, and the idea is pretty clever. Instead of storing rasterized shape in a texture, store a special texture where each pixel represents distance to the closest shape edge. When rendering with that texture, a pixel shader can do simple alpha discard, or more complex treatments on the distance value to get anti-aliasing, outlines, etc. The SDF texture can end up really small, and still be able to decently represent high resolution line art. Nice!

Then of course people realized that hey, the same approach could work for font rendering too! Suddenly, rendering smooth glyphs at super large font sizes does not mean “I just used up all my (V)RAM for the cached textures”; the cached SDFs of the glyphs can remain fairly small, while providing nice edges at large sizes.
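Here is a rough sketch of both halves of the idea: building a distance field from a 1-bit bitmap, and what a pixel shader would do with a sampled value. This is my own brute-force illustration, not the method from the paper. The names `signed_distance_field`, `coverage`, `spread` and `smoothing` are made up for the example, and the distance is measured to the nearest pixel of the opposite kind, which only approximates the true edge distance.

```python
import math

def signed_distance_field(bitmap, spread):
    """bitmap: list of rows of 0/1 values.
    Returns values in 0..1: 0.5 is the edge, above 0.5 is inside,
    below 0.5 is outside. Distances are clamped to `spread` pixels."""
    h, w = len(bitmap), len(bitmap[0])
    sdf = []
    for y in range(h):
        row = []
        for x in range(w):
            inside = bitmap[y][x]
            best = float(spread)
            # Brute force: search the neighborhood for the nearest
            # pixel that is on the other side of the edge.
            for v in range(max(0, y - spread), min(h, y + spread + 1)):
                for u in range(max(0, x - spread), min(w, x + spread + 1)):
                    if bitmap[v][u] != inside:
                        best = min(best, math.hypot(u - x, v - y))
            signed = best if inside else -best
            row.append(0.5 + 0.5 * signed / spread)
        sdf.append(row)
    return sdf

def coverage(sample, smoothing):
    """Shader-side step: smoothstep around the 0.5 edge value turns a
    (bilinearly filtered) SDF sample into anti-aliased alpha."""
    t = (sample - (0.5 - smoothing)) / (2.0 * smoothing)
    t = min(1.0, max(0.0, t))
    return t * t * (3.0 - 2.0 * t)
```

Replacing `coverage` with a hard `sample >= 0.5` test gives the plain alpha discard variant; thresholding at a different value gives outlines or a bolder shape.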

Of course the SDF approach is not without some downsides:

- Computing the SDF is not trivially cheap. While for most western languages you could pre-cache all possible glyphs off-line into an SDF texture atlas, for other languages that’s not practical due to the sheer number of glyphs possible.

- Simple SDF has artifacts near more complex intersections or corners, since it only stores a single distance to closest edge.

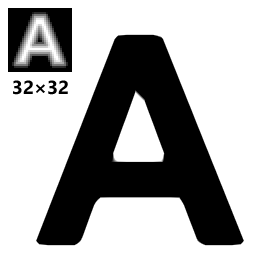

Look at the letter A here, with a 32x32 SDF texture - outer corners are not sharp, and inner corners have artifacts.

- SDF does not quite work at very small font sizes, for a similar reason. There it’s probably better to just rasterize the glyph into a regular bitmap.

Anyway, SDFs are a nice idea. For some examples or implementations, you could look at libgdx or TextMeshPro.

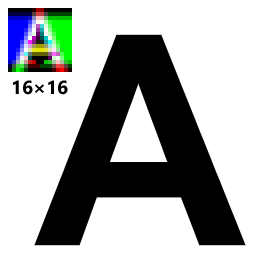

The original paper hinted at the idea of storing multiple distances to solve the SDF sharp corners problem, and a recent implementation of that idea is “multi-channel distance field” by Viktor Chlumský which seems to be pretty nice: msdfgen. See associated thesis too.

Here’s letter A as an MSDF, at even smaller size than before – the corners are sharp now!

That is pretty good. I guess the “tiny font sizes” and “cost of computing the (M)SDF” can still be problems though.
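On the rendering side the change from a plain SDF is tiny. As far as I understand the msdfgen README, the shader takes the median of the three color channels and then treats it like a regular distance value. Here is a sketch of that step in Python rather than shader code; `px_range` (how many screen pixels the distance range covers) is my own parameter name.

```python
def median(r, g, b):
    # Median of three values, written the way it usually is in shaders.
    return max(min(r, g), min(max(r, g), b))

def msdf_alpha(r, g, b, px_range):
    """Turn one filtered MSDF texel (channels in 0..1) into opacity."""
    signed_distance = median(r, g, b) - 0.5   # 0 is exactly on the edge
    return min(1.0, max(0.0, signed_distance * px_range + 0.5))
```

For example, `msdf_alpha(0.9, 0.8, 0.1, 4)` picks the median 0.8 and ends up fully opaque, while a texel with all channels at 0.5 sits right on the edge and gets half opacity.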

Fonts directly on the GPU?

One obvious question is, “why do this caching into bitmaps at all? can’t the GPU just render the glyphs directly?” The question is good. The answer is not necessarily simple though ;)

GPUs are not ideally suited for doing vector rendering. They are mostly rasterizers, mostly deal with triangles, etc etc. Even something simple like “draw thick lines” is pretty hard (great post on that – Drawing Lines is Hard). For more involved “vector / curve rendering”, take a look at a random sampling of resources:

- Rendering Vector Art on the GPU by Charles Loop, Jim Blinn (of course!) (2007),

- Precise vector textures for real-time 3D rendering by Zheng Qin, Michael McCool, Craig Kaplan (2008),

- Random-access rendering of general vector graphics by Diego Nehab, Hugues Hoppe (2008),

- NVIDIA Path Rendering (2012),

- Efficient GPU Path Rendering Using Scanline Rasterization by Rui Li, Qiming Hou and Kun Zhou (2016).

That stuff is not easy! But of course that did not stop people from trying. Good!

Vector Textures

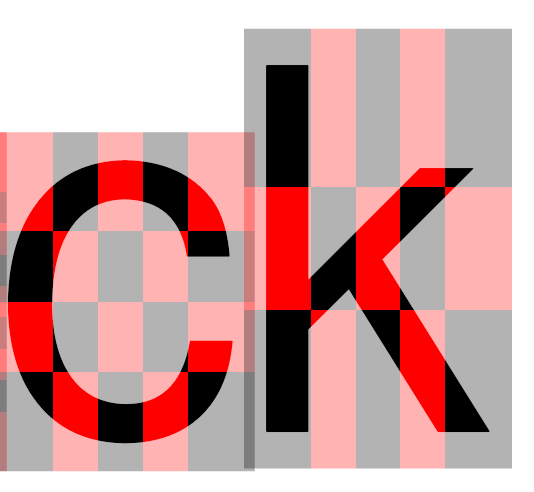

Here’s one approach, GPU text rendering with vector textures by Will Dobbie - divides glyph area into rectangles, stores which curves intersect it, and evaluates coverage from said curves in a pixel shader.

Pretty neat! However, it seems that it does not solve the “very small font sizes” problem (aliasing), has a limit on glyph complexity (number of curve segments per cell) and has some robustness issues.
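To get a feel for where the per-cell limit comes from, here is a small sketch of the "which curves touch which cell" preprocessing step. To be clear, this is my own guess at the general shape of it, not Will Dobbie's actual code: `bin_curves`, the grid size and `max_per_cell` are all made up, curves are quadratic Béziers in 0..1 glyph space, and the overlap test uses the control point bounding box, which is conservative.

```python
def bin_curves(curves, grid_w, grid_h, max_per_cell):
    """curves: list of ((x0, y0), (x1, y1), (x2, y2)) quadratic Beziers.
    Returns (cells, overflow): cells[cy][cx] is the list of curve indices
    that may touch that cell, overflow lists cells over the limit."""
    cells = [[[] for _ in range(grid_w)] for _ in range(grid_h)]

    def to_cell(value, count):
        # Map a 0..1 coordinate to a clamped cell index.
        return max(0, min(count - 1, int(value * count)))

    for index, curve in enumerate(curves):
        xs = [p[0] for p in curve]
        ys = [p[1] for p in curve]
        # A Bezier curve stays inside the convex hull of its control
        # points, so their bounding box never misses a touched cell
        # (it may include a few extra ones).
        for cy in range(to_cell(min(ys), grid_h), to_cell(max(ys), grid_h) + 1):
            for cx in range(to_cell(min(xs), grid_w), to_cell(max(xs), grid_w) + 1):
                cells[cy][cx].append(index)

    overflow = [(cx, cy) for cy in range(grid_h) for cx in range(grid_w)
                if len(cells[cy][cx]) > max_per_cell]
    return cells, overflow
```

A pixel shader would then only look at the handful of curves listed for its cell. Any cell in `overflow` is a spot where a fixed-size per-cell list would not fit the glyph.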

Glyphy

Another one is Glyphy, by Behdad Esfahbod (بهداد اسفهبد). There’s video and slides of the talk about it. Seems that it approximates Bézier curves with circular arcs, puts them into textures, stores indices of some closest arcs in a grid, and evaluates distance to them in a pixel shader. Kind of a blend between SDF approach and vector textures approach. Seems that it also suffers from robustness issues in some cases though.
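The nice thing about circular arcs is that distance to them is cheap to evaluate exactly. Here is a sketch of that one building block. Glyphy's real arc encoding and shader are different; the center / radius / start angle / sweep representation and the name `distance_to_arc` are assumptions made just for this illustration.

```python
import math

def distance_to_arc(px, py, cx, cy, radius, start_angle, sweep):
    """Unsigned distance from point (px, py) to a circular arc.
    The arc starts at start_angle (radians) and runs counter-clockwise
    for `sweep` radians (0 < sweep <= 2*pi) around center (cx, cy)."""
    dx, dy = px - cx, py - cy
    angle = math.atan2(dy, dx)
    # Angle of the point measured from the arc start, wrapped to 0..2*pi.
    relative = (angle - start_angle) % (2.0 * math.pi)
    if relative <= sweep:
        # Point is within the arc's wedge: closest point is straight
        # along the radius.
        return abs(math.hypot(dx, dy) - radius)
    # Otherwise the closest point is one of the two arc endpoints.
    best = float("inf")
    for a in (start_angle, start_angle + sweep):
        ex = cx + radius * math.cos(a)
        ey = cy + radius * math.sin(a)
        best = min(best, math.hypot(px - ex, py - ey))
    return best
```

A shader in this style would evaluate something like this for the few arcs stored in the current grid cell and keep the minimum, which is where the "blend of SDF and vector textures" feeling comes from.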

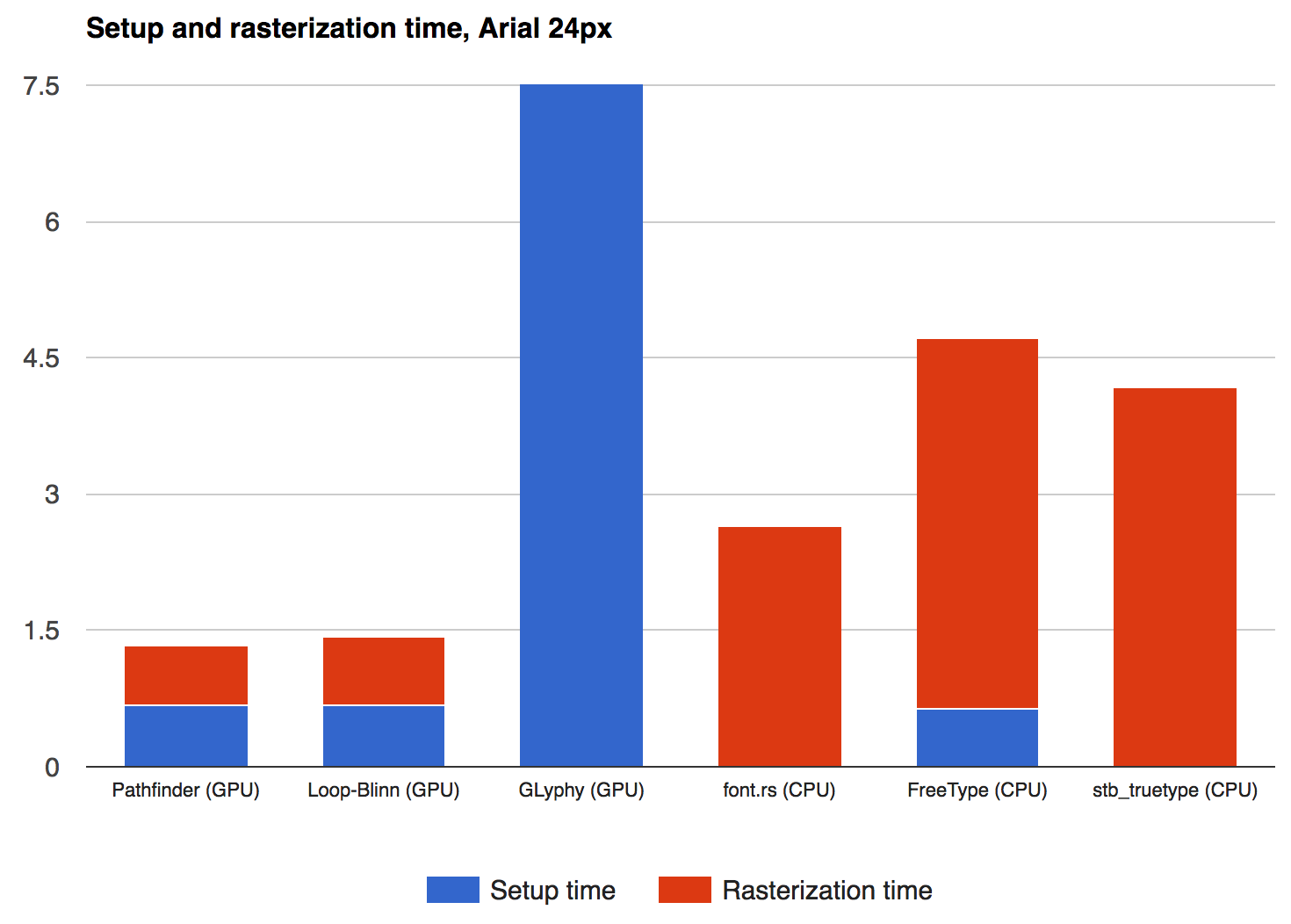

Pathfinder

A new one is Pathfinder, a Rust (again!) library by Patrick Walton. Nice overview of it in this blog post.

This looks promising!

Downsides, from a quick look, are a dependence on GPU features that some platforms (mobile…) might not have – tessellation / geometry shaders / compute shaders (not a problem on PC) – plus memory for the coverage buffer, and geometry complexity that depends on the font curve complexity.

Hints at future on twitterverse

From game developers/middleware space, looks like Sean Barrett and Eric Lengyel are independently working on some sort of GPU-powered font/glyph rasterization approaches, as seen by their tweets (Sean’s and Eric’s).

Can’t wait to see what they are cooking!

Did I say this is all very exciting? It totally is. Here’s to clever new approaches to font rendering happening in 2017!

Some figures in this post are taken from papers or pages I linked to above:

- SDF figure from Chris Green’s paper.

- Letter A SDF and MSDF images from msdfgen github page.

- Vector textures illustration from vector textures demo.

- Pathfinder performance charts from pathfinder post.